|

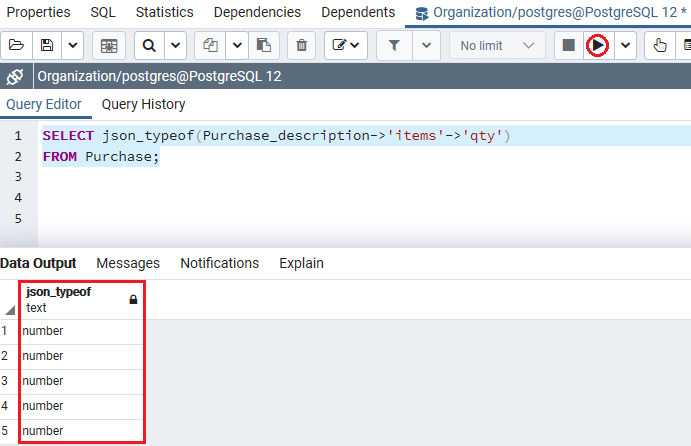

It is now possible to extract / unnest specific keys from a list of objects using jsonb path queries, so long as the field queried is jsonb and not. COUNT (DISTINCT (y.x::jsonb)->'name') updated answer for postgresql versions 12+. There are only 4 distinct values in the data currently, and I don't expect to have many more. JSONB supports the equality comparison, thus it is possible to use COUNT DISTINCT along with extraction via ->, i.e. That's about 8 minutes to filter! Did I do something wrong here, or is this the consequence of PostgreSQL not keeping statistics on JSON(B) objects? It should be possible to flatten out this data column, but I'd like to be sure that's what I need to do before I start working on it.Įdit: these array lengths do not vary much. you can build both JSON arrays and objects without using aggregates as long as the size of each is fixed: WHERE dimensions > jsonbbuildarray (jsonbbuildobject (,),jsonbbuildobject (,)) But maybe you should be using some C++ library to build the JSON and pass it already formed down to PostgreSQL. A row in PostgreSQL is represented as a tuple.

select (jsonbarrayelements (transaction->'transactions')->'id')::text as trxid from transactions SQL alchemy returns a list of tuples. > Seq Scan on table (cost=256.19 rows=12159794 width=8)įilter: (jsonb_array_length((table.data -> 'items'::text)) = 2) You can write the query simpler without CTE which probably would perform better. I created an index on the length of the "items" element: CREATE INDEX n_items ON table ( JSONB_ARRAY_LENGTH(data->'items') )īut when I filter, I still get a sequential scan when I try to filter on it: EXPLAIN ANALYZE SELECT COUNT(*) FROM table WHERE JSONB_ARRAY_LENGTH(table.data->'items') = 2 Īggregate (cost=25656655.68 rows=1 width=8)

I have a table table with a JSONB field data, which contains a variable-length array, e.g.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed